OpenAI's unCLIP Text-to-Image System Leverages Contrastive and Diffusion Models to Achieve SOTA Performance | Synced

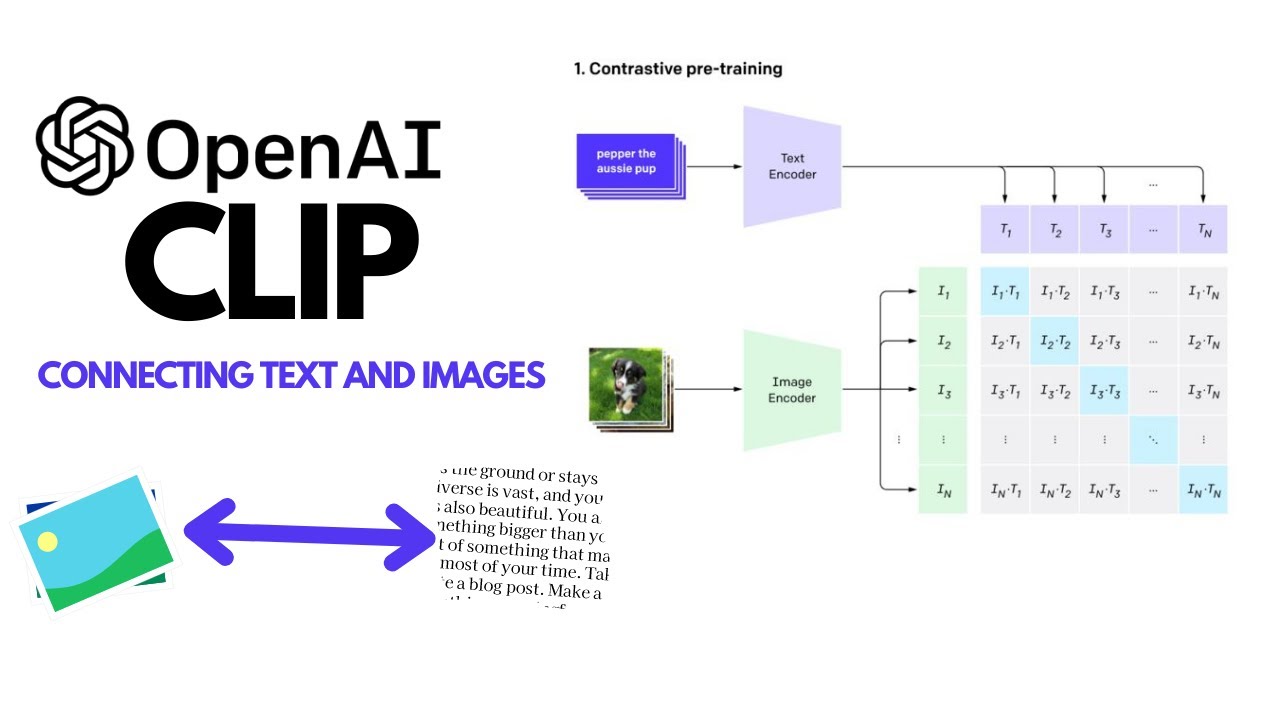

OpenAI's CLIP Explained and Implementation | Contrastive Learning | Self-Supervised Learning - YouTube

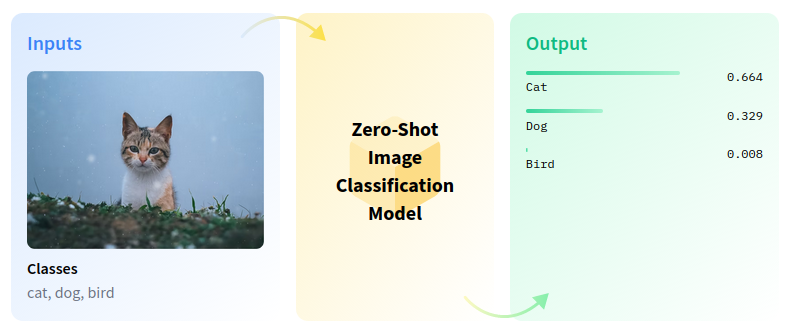

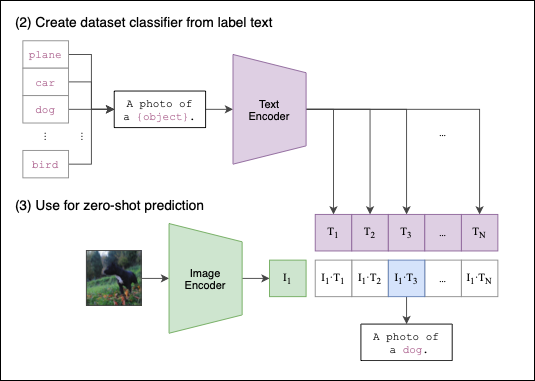

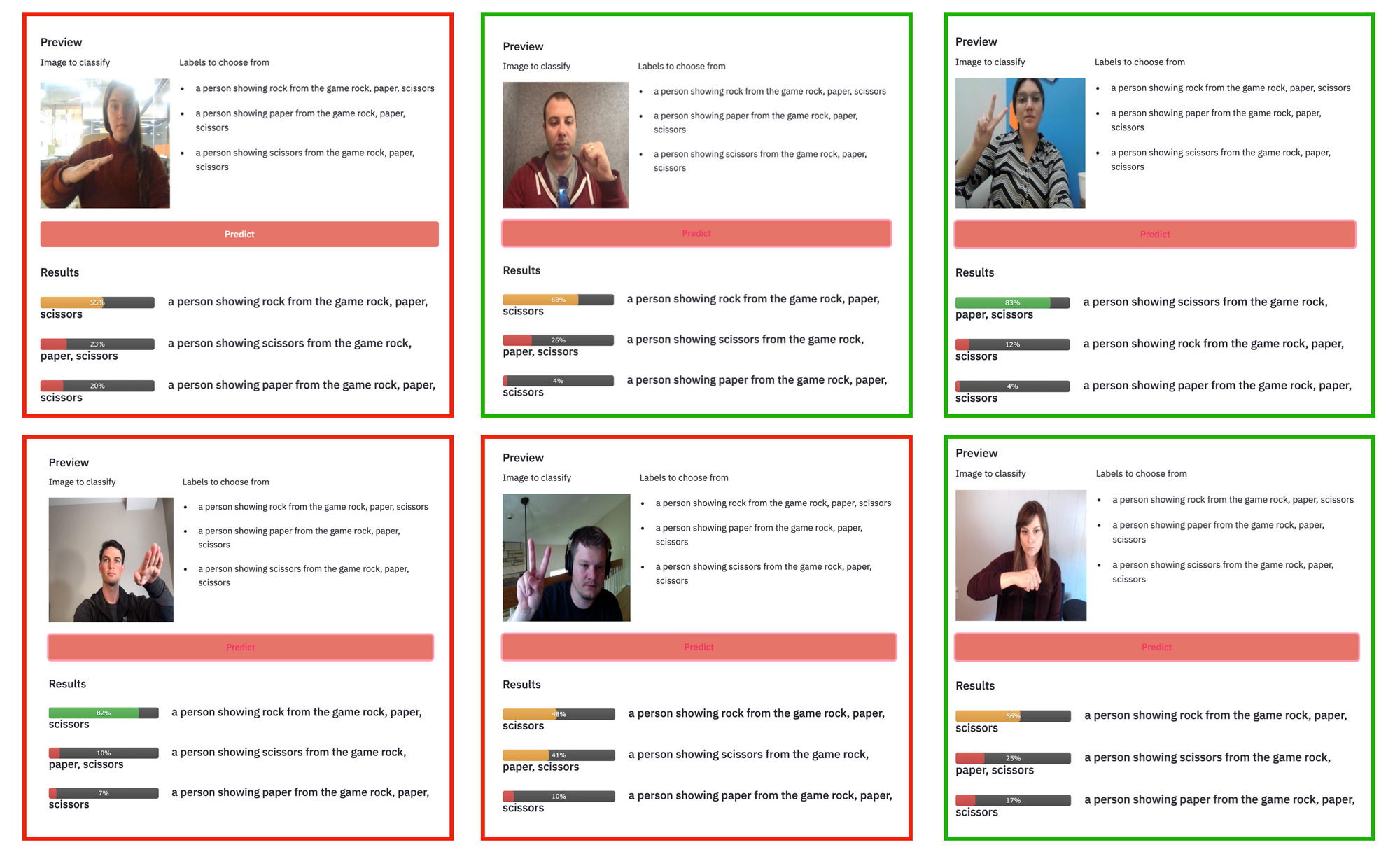

Zero-shot Image Classification with OpenAI CLIP and OpenVINO™ — OpenVINO™ documentation

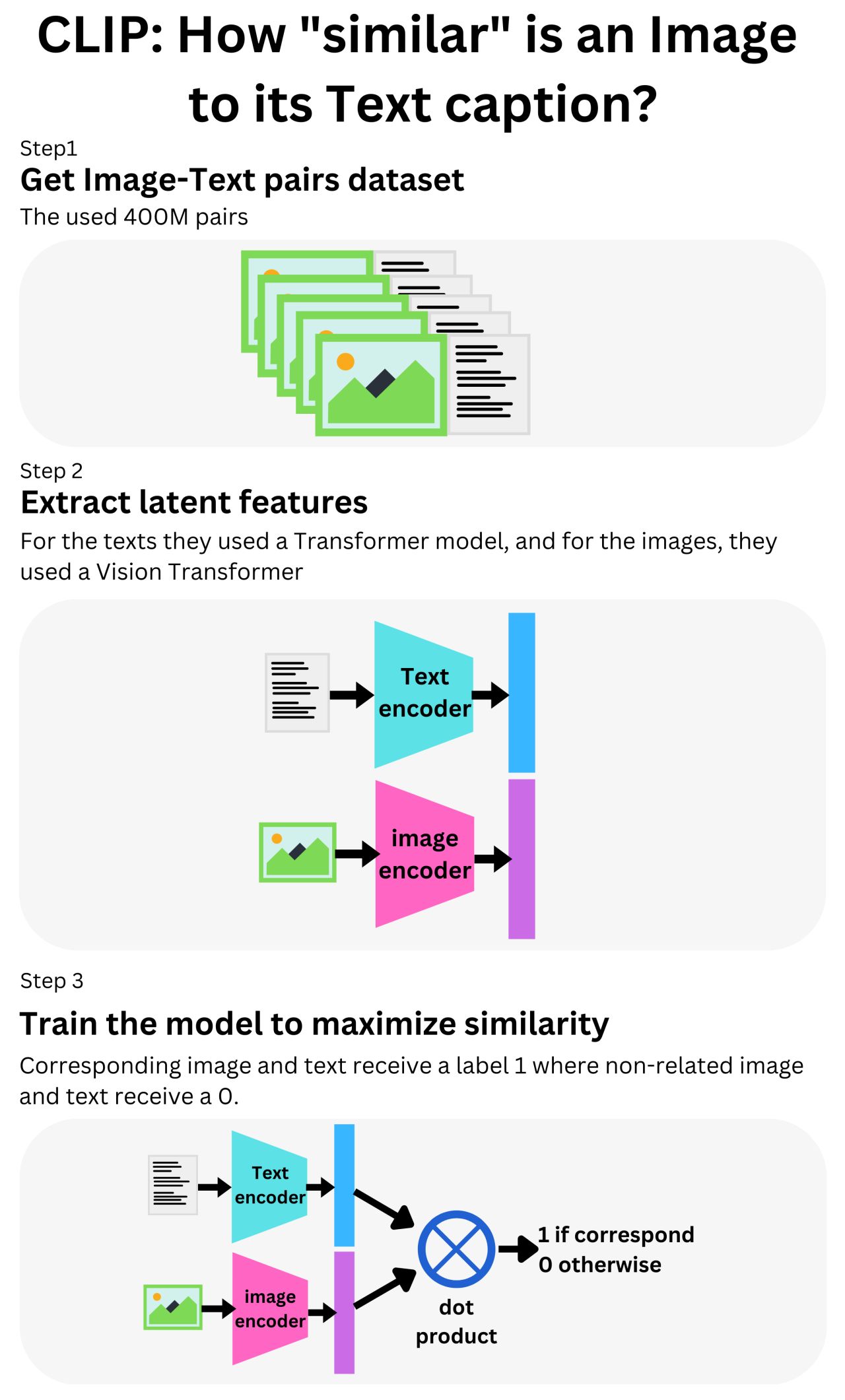

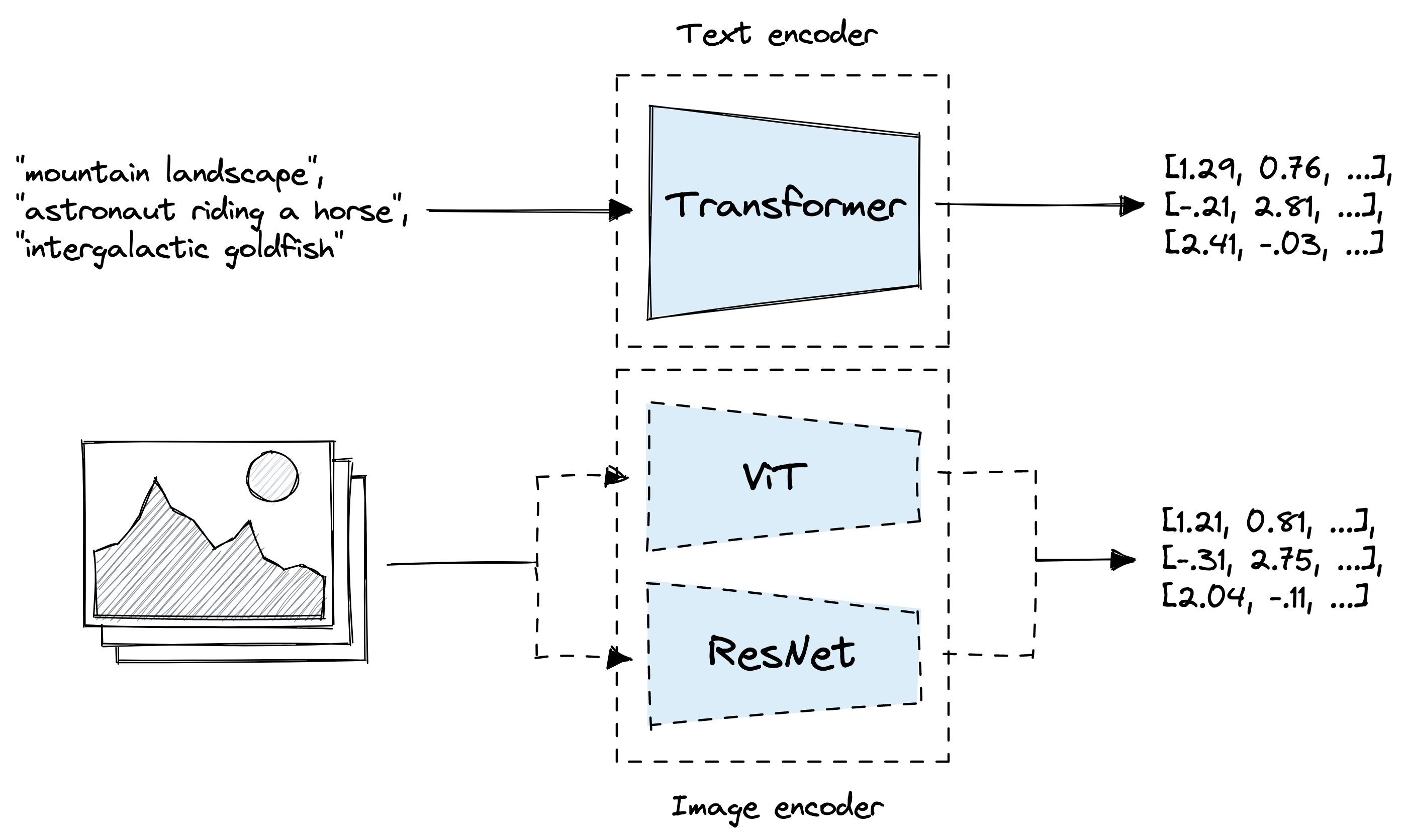

GitHub - openai/CLIP: CLIP (Contrastive Language-Image Pretraining), Predict the most relevant text snippet given an image

Nick Davidov — e/acc on X: "Microsoft acquiring 49% in OpenAI is just a step in Paperclips plot to take over the planet https://t.co/T6WwFUSpTj" / X

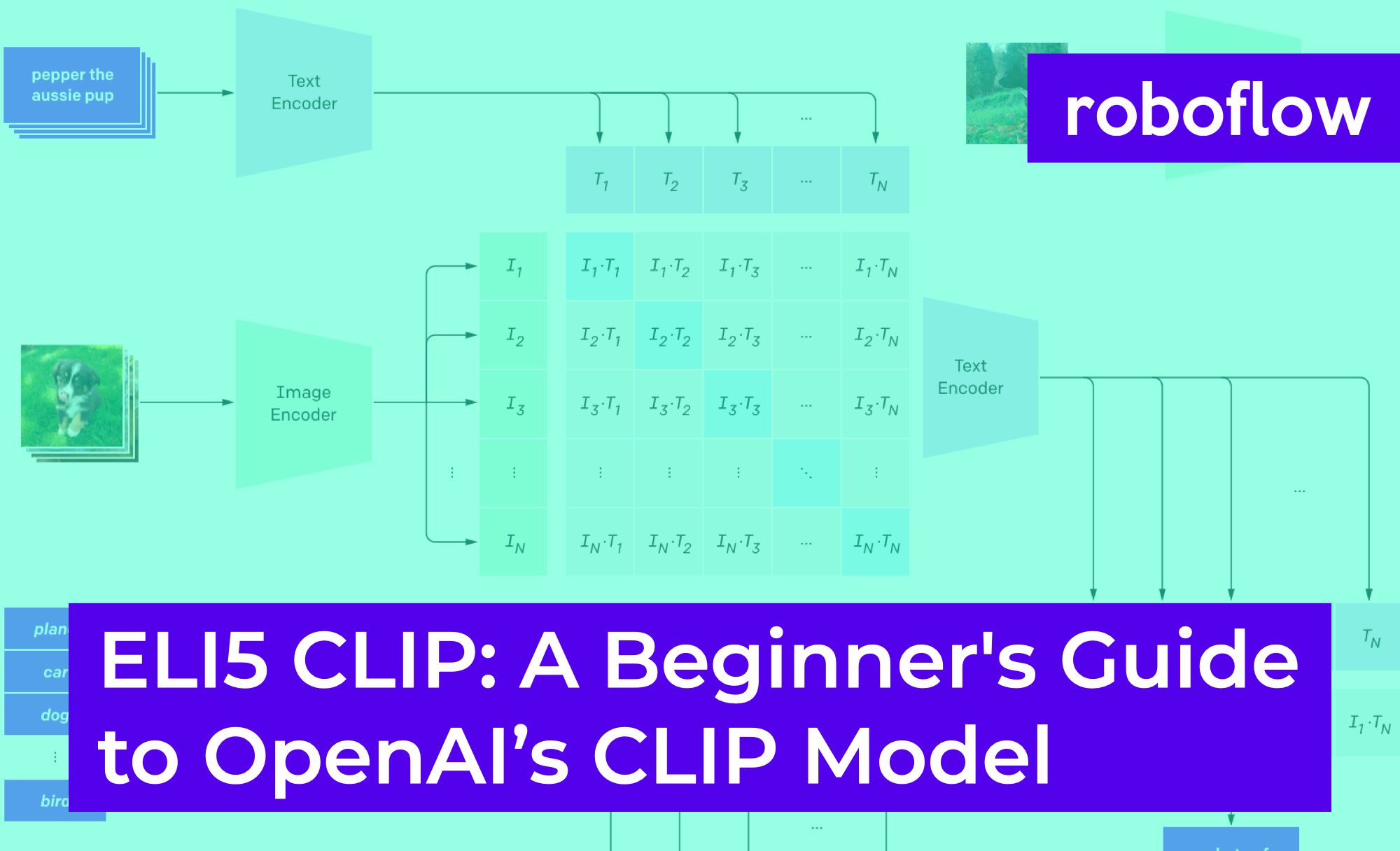

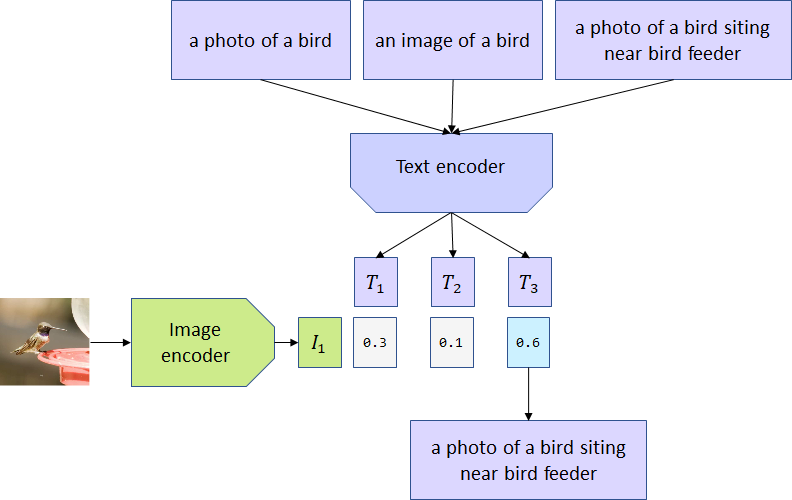

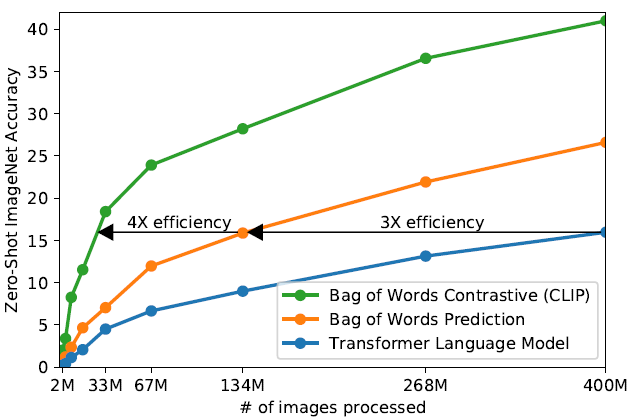

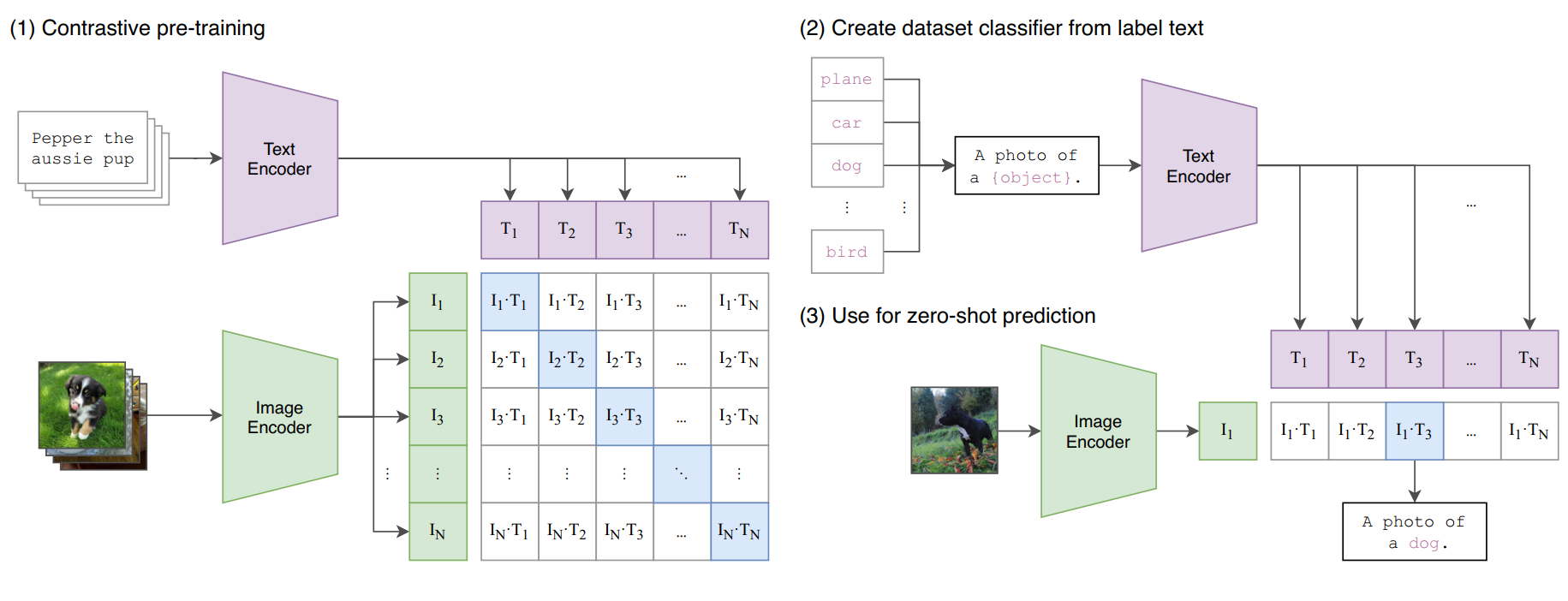

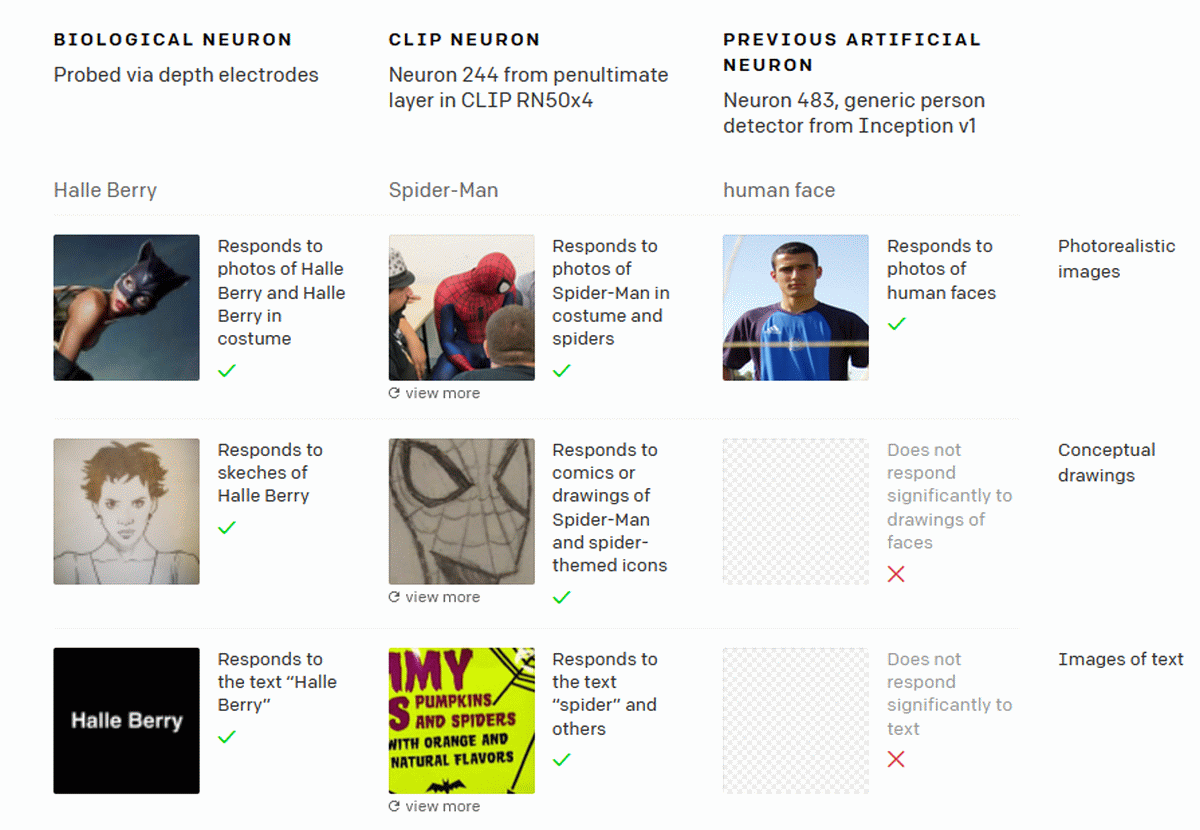

![\[MultiModal\] CLIP (Learning transferable visual models from natural language supervision)](https://velog.velcdn.com/images/ji1kang/post/33829863-1ac1-49f0-bfe3-f115b467f9b0/image.jpg)